Research

Research Summary

I am interested in computational models of human visual perception. The central question in my research is representation, which means more than vectorization followed by classification. The right representation must reflect the general reduction of visual information.

Rather than designing algorithms to fit a particular application scenario, I am fascinated by the "imperfect" design of our visual system. I believe our visual system is so well designed that its seemingly flawed structures and functions hide a treasury that transcends our humble intelligence.

I believe in the power of AAI ("Artificial" Artificial Intelligence), which brings humans into the processing loop. I am also interested in further enhancing this power by designing algorithms that aggregate human labels more efficiently.

Recent Interests

- Active learning

- Human computation

- Sparse signal analysis

- Non-parametric deep models

Benchmarks vs. Algorithms

Boundary Detection Benchmarking: Beyond F-Measures (CVPR 2013) identifies potential pitfalls that boundary detection benchmarks pose when evaluating algorithms.

Through extensive analysis, we arrived at several alarming observations:

- Today's evaluation protocol has a "precision bonus" that favors weak boundaries.

- Algorithm outputs correlate EXTREMELY WEAKLY with the perceptual strength of boundaries.

- NONE of today's well-known algorithms can detect "strong boundaries" significantly better than random.

- Some "salient boundary" detectors perform poorly in our evaluation.

- The best way to use a boundary detector is to adopt a low threshold and live with false alarms.

- Top-performing algorithms have passed the Restricted Turing Test in terms of misses under low thresholds.
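Several of these observations concern detector thresholds and F-measures. The minimal Python sketch below shows how a precision/recall/F-measure sweep over thresholds works. It is a deliberate simplification: it counts exact pixel overlaps, whereas standard boundary benchmarks match predicted and ground-truth boundary pixels within a small distance tolerance. It does not implement the evaluation proposed in the paper. The function names and the convention that precision is 1.0 when nothing is detected are hypothetical choices made for illustration.

```python
import numpy as np

def precision_recall_f(pred, gt):
    """Pixel-exact precision, recall and F-measure for boolean boundary maps.

    Simplification: real benchmarks allow a distance tolerance when matching pixels.
    """
    tp = np.logical_and(pred, gt).sum()  # predicted pixels that hit ground truth
    n_pred = pred.sum()
    n_gt = gt.sum()
    # Assumed convention: an empty prediction has precision 1.0 (no false alarms).
    precision = tp / n_pred if n_pred else 1.0
    recall = tp / n_gt if n_gt else 1.0
    denom = precision + recall
    f = 2 * precision * recall / denom if denom else 0.0  # harmonic mean of P and R
    return precision, recall, f

def threshold_sweep(soft, gt, thresholds):
    """Binarize a soft boundary map at each threshold and score it."""
    return [(t, *precision_recall_f(soft >= t, gt)) for t in thresholds]
```

Lowering the threshold adds detections. This can only keep or raise recall while typically lowering precision, which is the trade-off behind "adopt a low threshold and live with false alarms".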

Benchmarking a Boundary Detection Benchmark

A meta-theory of boundary detection benchmarks (NIPS 2012 Workshop) analyzes the behavior of human annotators in boundary detection benchmarks.

In this paper, we discuss the systematic noise that human labeling introduces into a benchmark's ground-truth data.

We raise the issue of "boundary strength" and propose a graphical model to infer this latent variable.

The poster for this paper can be found here.

This paper received the Best Presentation Award at the NIPS 2012 Workshop on Human Computation for Science and Computational Sustainability.

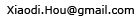

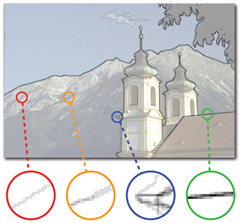

Image Signature

We analyze the situation in which a sparse signal is mixed with a regular signal. We show that the image signature is a good descriptor for sparse signals, whose support can be approximately recovered using extremely simple operations.
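The minimal Python sketch below illustrates the kind of "extremely simple operations" involved. It rests on several assumptions that this page does not state. The image signature is taken to be the elementwise sign of the 2-D discrete cosine transform (DCT) of a single-channel image. The support estimate comes from inverting the signature and smoothing its squared magnitude with a Gaussian. The function names and the smoothing width `sigma` are hypothetical, and this is not the released MATLAB code.

```python
import numpy as np
from scipy.fft import dctn, idctn
from scipy.ndimage import gaussian_filter

def image_signature(x):
    """Assumed definition: sign of the orthonormal 2-D DCT of image x."""
    return np.sign(dctn(x, norm="ortho"))

def reconstruct(x):
    """Invert the signature: x_bar = IDCT(sign(DCT(x)))."""
    return idctn(image_signature(x), norm="ortho")

def saliency_map(x, sigma=3.0):
    """Gaussian-smoothed squared reconstruction, scaled so its maximum is 1.

    sigma is a hypothetical smoothing width, in pixels.
    """
    x_bar = reconstruct(x)
    m = gaussian_filter(x_bar * x_bar, sigma=sigma)
    return m / m.max()
```

In the simplest case, a single bright pixel on a blank 64x64 image, the squared reconstruction `reconstruct(x) ** 2` peaks exactly at that pixel. Only one sign per DCT coefficient is kept, yet the location of the sparse component survives.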

The MATLAB scripts for benchmarking are here.

Co-authors

- Prof. Liqing Zhang: Brain-like computing, signal processing

- Bolei Zhou: Computer vision, social computing

- Jonathan Harel: fMRI signal analysis, intermediate feature analysis, object recognition

- Prof. Christof Koch: Consciousness, neuroscience, computational modeling, biophysics

- Prof. Fanji Gu: Neuroscience

- Prof. Alan Yuille: Computer vision

- Yin Li: Computer vision, optimization